What is the long-term effect of using LLM chatbots for daily tasks? According to a study (DOI link) by Steven D. Shaw and Gideon Nave of the University of Pennsylvania, the observable effect is one of ‘cognitive surrender’, in which users blindly accept the generated answers.

There has long been a struggle between those who feel that it’s fine for humans to rely on available technologies to make tasks like information recall and calculations easier, and those who insist that a human should be perfectly capable of doing such tasks without any assistance. Plato argued that reading and writing hurt our ability to memorize, and for the longest time it was deemed inappropriate for students to even consider taking one of those newfangled digital calculators into an exam. Now we have many arguing that using an ‘AI’ is the equivalent of using a calculator.

Yet as the authors succinctly point out, there’s a big difference between a digital calculator and one of these LLM-powered chatbots in how they affect human cognition, and it’s one that’s worth thinking about for yourself.

Surrender Versus Offloading

Cognitive offloading is the practice of shifting cognitive tasks to external aids, and it is thought to make learning complex tasks easier. Take the rote memorization of facts like dates of events and formulas: if we consider books to be an external memory storage device, then we can offload such precise memorization to their pages, and only require of students that they are capable of efficiently finding information and judging it on its merit.

An often misquoted anecdote here pertains to Albert Einstein, who was once asked why he couldn’t cite the speed of sound from memory. To this he responded with a curt:

[I do not] carry such information in my mind since it is readily available in books. …The value of a college education is not the learning of many facts but the training of the mind to think.

Einstein is making the case for the benefits of cognitive offloading. Rote memorization does not enhance one’s cognition, and the ability to solve complicated equations and sums without so much as the use of pen and paper is fairly irrelevant when a slide rule or a digital calculator can offload all that work. As an added benefit, these devices tend to be more precise, faster and very accessible.

It is still important to have a ‘feeling’ for whether a calculation is correct, and one should never assume that what is written in a book is the absolute truth. That is the key difference between cognitive offloading and ‘cognitive surrender’. If you type numbers into your calculator, and the result seems off, and you re-type them to be sure, that’s cognitive offloading. If you don’t bother with the sniff-test, that’s cognitive surrender.
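To make the calculator sniff-test concrete, here is a minimal Python sketch. It is purely illustrative and not taken from the study: the function names `rough_estimate` and `passes_sniff_test`, as well as the factor-of-three threshold, are assumptions chosen for this example. The idea is to round each factor to one significant figure, multiply those in your head, and only trust the displayed result if it lands in the same ballpark.

```python
import math


def rough_estimate(a: float, b: float) -> float:
    """Mental-math style estimate: round both factors to one significant figure."""

    def one_sig_fig(x: float) -> float:
        if x == 0:
            return 0.0
        magnitude = 10 ** math.floor(math.log10(abs(x)))
        return round(x / magnitude) * magnitude

    return one_sig_fig(a) * one_sig_fig(b)


def passes_sniff_test(reported: float, a: float, b: float, factor: float = 3.0) -> bool:
    """Return True if `reported` is within `factor` of the rough estimate of a * b.

    The threshold is an assumption for illustration: it is loose enough to
    tolerate the rounding error of the estimate, yet tight enough to catch a
    slipped decimal point (a factor of ten).
    """
    estimate = rough_estimate(a, b)
    if estimate == 0:
        return reported == 0
    ratio = reported / estimate
    return 1 / factor <= ratio <= factor


# 412 * 38 is roughly 400 * 40 = 16000, so 15656 is plausible.
print(passes_sniff_test(15656, 412, 38))
# A mistyped 3.8 instead of 38 gives 1565.6, which should prompt a re-type.
print(passes_sniff_test(1565.6, 412, 38))
```

Offloading means letting the calculator do the exact arithmetic while you still run a cheap check like this one. Surrender means skipping the check altogether.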

So are we using LLM chatbots as reference sources that we’ll think twice about, or is it something more?

External Cognition

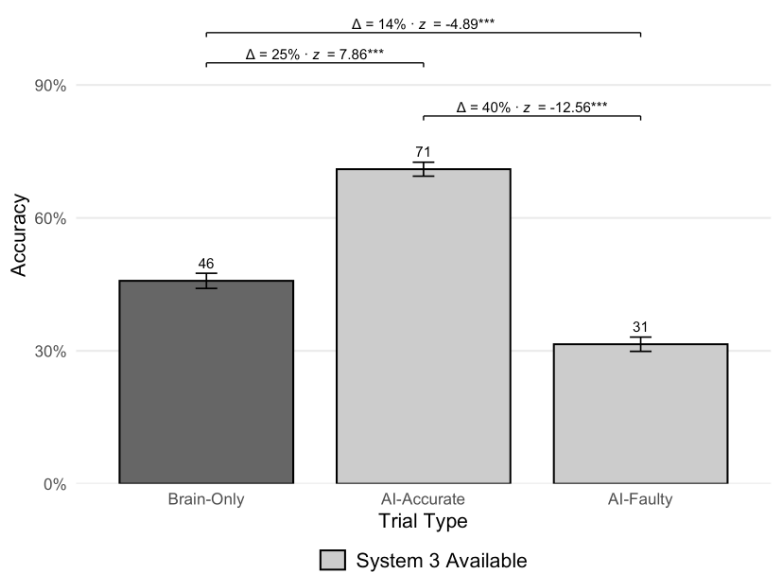

In the referenced study, Shaw et al. had three groups of volunteers take a standardized test, during which one group had to rely purely on their own wits, the second group could use an LLM chatbot which gave correct answers, while a third group also had access to this chatbot, but for them it gave wrong answers.

System 3 facilitates cognitive surrender. (Credit: Shaw et al., 2026)

Perhaps unsurprisingly, the test subjects used the chatbot quite a lot when available, with predictable results. In the ‘tri-system theory of cognition’ that Shaw and Nave propose in the paper, the external cognitive system (‘System 3’) is that of the chatbot, whose output is clearly being accepted verbatim by a significant share of the test subjects. If said chatbot output is correct, this is great, but when it’s not, the test results suffer massively.

Where this is worrisome outside of such self-contained tests is that people are exposed to endless amounts of faulty LLM-generated text, for example in the form of the ‘AI summaries’ that search engines love to put front and center these days. Back in 2024, Avram Piltch over at Tom’s Hardware compiled an amusing collection of such faulty outputs, some of which are easier to spot than others.

The examples range from the health effects of eating nose pickings, to the speed difference between USB 3.2 Gen 1 and USB 3.0, to classics like adding Elmer’s glue to pizza sauce. It’s generally possible to find where on the internet such a ridiculous claim was scraped from for the LLM’s dataset, while other types of faulty output are simply due to an LLM not possessing any intelligence or essentials like grasping what a context is.

Meanwhile other types of output are clearly confabulations, a fact which ought to be obvious to any intelligent human being, and yet it seems that so much of it passes whatever sniff test occurs within the cognitive capabilities of the average person.

Making Decisions

Anterior cingulate gyrus. (Credit: BodyParts3D, Wikimedia)

In the generally accepted model of cognitive decision making we see two internal systems: the first is the fast, intuitive and emotion-driven system. The second is the deliberate and analytical system, which tends to take a backseat to the first system in general, but could be said to be checking the homework of the first.

Although psychology is hardly an exact science, in the scientific fields of systems neuroscience and cognitive neuroscience we can find evidence for how decisions are made in the primate brain – including those of humans – with various cortices involved in the decision-making process. Fascinating here is the activity observed in the parietal cortex where a decision is not only formed, but also apparently assigned a degree of confidence.

Lesions in the anterior cingulate cortex (ACC) have been linked to impaired decision making and the emergence of impulse control issues, as the ACC appears to be instrumental in error detection. Issues in the ACC are thus more likely to result in faulty or flawed decisions and judgements passing by uncorrected. Incidentally, the ACC was found to be heavily affected by environmental tetraethyl lead contamination, underpinning the theory that leaded gasoline was responsible for a surge in crime until this additive was discontinued.

With these findings in mind, we can thus rather confidently state that the emergence of LLM-based chatbots does not really add anything new, although it could be said to worsen existing flaws within the primate brain when it comes to said decision making. It looks like we give up our oversight role when LLMs are involved.

Irrational

Of course it’s not just LLMs. One could comfortably argue that things like politics, idols, religion, and advertising could not exist if people were completely rational beings whose cognitive processes belonged completely to themselves.

Still, it seems that LLM-based chatbots with their often very convincingly human-like and authoritative outputs have hit the same weaknesses that unscrupulous religious leaders and scammers exploit, with sometimes tragic consequences. Believing some factual misinformation generated by a chatbot is clearly a far cry from deciding to take fatal actions based on a dialog with said chatbot, but both cases highlight the importance of retaining your critical thinking skills.

While we can generally trust a calculator, an LLM-based chatbot is not nearly as reliable or benign. Caution and awareness of the risk of cognitive surrender are thus well warranted.