Reading a book about bowling is not the same as actually bowling. If that resonates with you and you want to learn more about large language models, check out the LLM From Scratch project. The hands-on workshop runs on a Mac, Linux, or Windows machine with Python and common libraries like NumPy and PyTorch (the `numpy` and `torch` packages), and walks you through building your own bare-bones LLM.

The project takes inspiration from nanoGPT but scales it down so you can train the model in around an hour on a typical computer. It will use an Apple or NVIDIA GPU, if available.
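
To make the GPU fallback concrete, here is a minimal sketch of how a PyTorch script can pick a device. It is not taken from the project; the function name `pick_device` is hypothetical, and the preference order (NVIDIA first, then Apple, then CPU) is an assumption.

```python
import torch


def pick_device() -> torch.device:
    """Return the fastest available device (hypothetical helper, not from the project)."""
    # NVIDIA GPUs are exposed through the CUDA backend.
    if torch.cuda.is_available():
        return torch.device("cuda")
    # Apple GPUs are exposed through the MPS (Metal) backend in recent PyTorch versions.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return torch.device("mps")
    # Otherwise fall back to the CPU, which always works but trains more slowly.
    return torch.device("cpu")


device = pick_device()
# Tensors and models are then created on, or moved to, the chosen device.
x = torch.zeros(2, 3, device=device)
print(device.type, x.shape)
```

Everything else in a training script can stay the same; only the device passed to tensors and the model changes.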

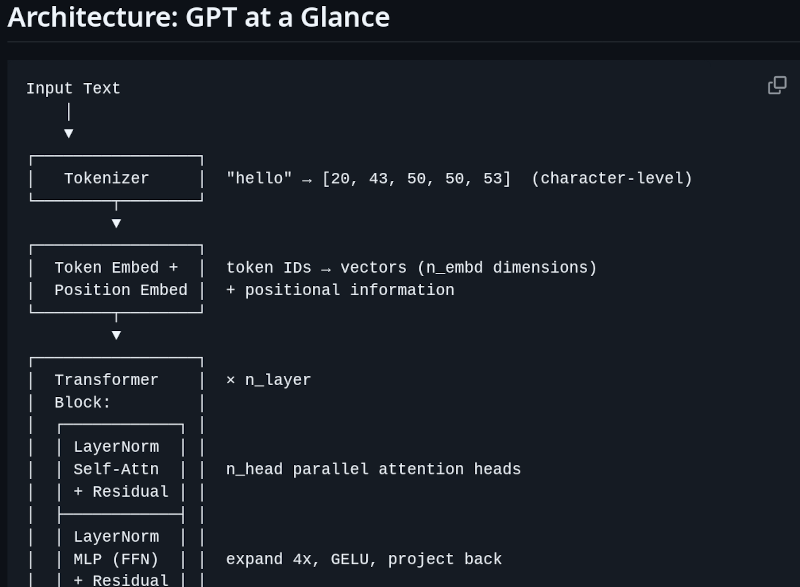

The workshop has six parts: the tokenizer, the transformer, the training loop, text generation, and two wrap-up parts in which you train the model and find the best AI poet.
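
For a feel of what the first part involves, here is a rough sketch of a character-level tokenizer, the simplest kind used in small nanoGPT-style experiments. Whether the workshop uses this exact scheme is an assumption, and the class name `CharTokenizer` is made up for illustration.

```python
class CharTokenizer:
    """Maps each distinct character to an integer ID and back (illustrative only)."""

    def __init__(self, text: str) -> None:
        # The vocabulary is every distinct character in the training text, sorted
        # so that the IDs are stable from run to run.
        self.chars = sorted(set(text))
        self.stoi = {ch: i for i, ch in enumerate(self.chars)}
        self.itos = {i: ch for ch, i in self.stoi.items()}

    @property
    def vocab_size(self) -> int:
        return len(self.chars)

    def encode(self, s: str) -> list[int]:
        # Raises KeyError for characters that were not in the training text.
        return [self.stoi[ch] for ch in s]

    def decode(self, ids: list[int]) -> str:
        return "".join(self.itos[i] for i in ids)


tok = CharTokenizer("hello world")
ids = tok.encode("hello")
print(ids, tok.decode(ids), tok.vocab_size)
```

The model never sees text directly. It is trained to predict the next ID in a sequence like `ids`, and text generation decodes its predicted IDs back into characters.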

In addition, the references section has a number of interesting papers, including some you’ve probably seen before and some that you may have missed.

We like learning things from first principles when possible. If you aren’t keen on Python, you can also build your own LLM in a spreadsheet.